Reference:Device Health Check

Applies To

This information applies to

- all innovaphone devices

More Information

This article advises how to perform a quick health check on an innovaphone device. This may serve as guidance for routine checking of a PBX systems state as well as to start debugging when a device malfunctions.

Overview

To perform a device health check, the following steps are recommended

- Inspect the device alarm table

- Inspect the device event list

- Inspect performance counters

- Check volatile memory (RAM) usage

- Check CPU usage

- Check persistent memory (Flash) usage

- Check CF card usage

- Examine system log

- Check for efficient PBX configuration

Depending on the device type, some of the steps may or may not apply.

The Alarm Table

The systems alarm table (Maintenance/Diagnostics/Alarms) should always be empty. If there are entries in it, examine them carefully and fix the problem so that the alarms disappears. The reason for this is pretty simple: if the alarm table is filled with entries that you already have checked but considered acceptable, then it will not take long until a severe problem will hide itself in between the harmless entries. So make it a habit that your alarm table is empty always.

The alarms have a details page available in the Code columns. These sometimes show useful further information. Also, many of the error codes have a dedicated wiki help page, available through the details page Help button.

The Event List

The systems events list available under Administration/Diagnostics/Events may contain a fair amount of entry types. As opposed to the alarm table, where the entry is removed when the problem is fixed, entries in the event list are not removed (except the list exceeds its allowable size in which case the oldest entries will be removed). As a result, your event list will likely rarely be empty. You need to work through the list and analyse each entry to determine if or if not it is still relevant. To avoid analysing entries again and again, you can clear the whole list when finished, or (from V8 on), you can declare it as already taken care of using the Mark button in the detail page (which will render the entries Code column in green instead of red).

As for alarms, it is important to take care of problems which frequently create entries in the event list, as otherwise you will most likely overlook severe problems in a crowded event list.

The Calls Screen

One important issue is consistently good voice quality. In a life system, you can do a quick check on voice quality simply by looking at the PBX's Administration/PBX/Calls screen.

Number Name Protocol Media Dir Number Name Protocol Media Uptime State 13 abc H323EFC:TRANSIT G711A (2,0,0) x >> 001730 H323EFC:TRANSIT G711A (2,0,0) x 0d 0h 29m 31s Connected SRTP 0017200 H323EFC:TRANSIT G711A (46,5,3) >> 37 cba H323EFC:TRANSIT G711A (39,8,29) 0d 0h 7m 2s Connected

Have an eye on the values within round parentheses in the Media column, most notably the third packet loss value. These should be low, more than 5/minute for packet loss e.g. is a good reason to be worried (so in our example, the second call is still acceptable but on the lower end of satisfaction). More information about the call screen can be found in How to read the call screen.

Recent Call Data

While the calls screen is a good tool to see how your calls feel like right now, they do not allow you to review data for terminated calls. A rule of thumb however says that everything is fine as long as you look at it, but gets worse as soon as your end customers are left alone with the system. A nice tool to get at past data easily is the internal Administration/Gateway/CDR0 CDR logging. You can save call detail records on your local CF card (using the LOCAL Log Server Type which will save them to /DRIVE/CF0/log/CDR*.txt. You can open these files with any text editor and although they look a bit strange, you can search straight for entries like &xcoder=G711A,30(0,10,0)&rcoder=G711A,30(0,0,0). The values in parentheses have the same meaning as in the PBX's calls screen.

The Performance Counters

The systems performance counters available under Maintenance/Diagnostics/Counters are another valuable tool to determine its health status. These are graphs which show the status of certain resource over the last 24 hours (8 hours are shown, you can scroll through time using the arrow buttons). Each individual value on the x-axis is a 2-minutes average. Lets have look at the resource counters currently available:

CPU

This counter shows the total CPU usage. A system that runs near to 100% all the time is a candidate for unpleasant behaviour, obviously. However, running on 100% simply says that things are getting slow. It does not necessarily say that things don't work. But as a rule of life, demand is always increasing, not decreasing, so there are two options in such a situation:

- be prepared for an upgrade

- eliminate the reason for excessive CPU usage

We will discuss in a later section of this article how to determine who the CPU hog is.

We generally recommend to make sure CPU load is not more than two thirds in average. This of course is just a rule of thumb. You may well run with a system with higher load or you may experience problems with a system with lower load. Still, if your system continuously runs with more than 2/3 of its CPU power, you should ask yourself if this is expected behaviour.

Also, watch out for unusual peaks. When you have a system that has a healthy looking CPU load graph, see if there are perhaps thin peaks. These would indicate short time frames with high load. They do not look impressive in the graph, still they may create severely bad user experience. Suppose for example there is a CPU usage pattern which creates 100% CPU load for a minute every once in a while. This would look like a comfortable 50% value in the 2-minutes graph slice. The end user though may experience service outages, signalling time-outs, frustrating response times etc. during this period of time. Such patterns usually look like a sharp needle in the graph and you should pay attention if you observe such.

CPU-R

This counter shows the amount of reserved CPU time. As opposed to the CPU counter, this is not CPU load that actually is used, but CPU performance which needs to be reserved for real-time application. Sending/receiving RTP data is considered real-time, for example. As opposed to, say HTTP access, where missing CPU performance merely makes things running slower, missing CPU performance for transmission of RTP data results in voice drop-outs and thus is considered a failure. This is why calls are rejected right away when no CPU performance can be reserved. When your CPU-R counter runs near to 100% often, then your system is overloaded and needs to be upgraded. Note that real-time apps do not suffer from 100% CPU values though, as the innovaphone operating system features a very efficient real-time prioritization.

You can take a look over the used mips. With this command you get the exact information of the used mips in this moment. You can also use the CPU-R counter and move the cursor over the diagram. This method is a little bit inexact. This command is much faster than the CPU-R counter. The counter has a 5 Sec delay.

ip-PBX/!mod cmd CPU mips-usage

MEM

This counter shows the total volatile memory (RAM) usage. Memory usage is in fact more critical than the CPU usage, as a system with low or no CPU resources left will still run, albeit slowly. A system with no memory resources will stop working and re-boot instead! It is thus crucial to have an eye on memory usage. You may observe that the memory usage graph usually is flat, that is, the value never decreases and rarely increases, especially once the device ran for a while after a re-boot. This is because the memory allocation strategy eventually claims memory for a specific purpose if needed, but never de-allocates it further on. Instead, objects allocated but no longer used are marked as free and re-used when an object of the same type is needed later on. The memory allocation graph shows all allocated objects, including those which are used and those which are currently free (as they are not available for use by objects of other types).

If memory usage grows steadily, there is most likely a memory leak somehow. That is, a function in the device allocates objects and thus claims memory but fails to mark those objects as free when done. If this happens, each function invocation will result in lost memory and the only way to recover from this situation is to re-boot the system. We will discuss how to track down memory hogs in a later section.

PRIx / BRIx

These counters show the number of B-channels used on the respective ISDN interfaces. Its interpretation should be fairly straight forward.

Finding a CPU Hog

In the event that you observe excessive CPU load, you need to find out which function actually consumes it. Once you have done so, you can either try to re-configure the system so that the causing function is used less, or you can add an extra device and move this function to the new device. For example, if you find that your PBX runs out of CPU resources because of heavy load on the system's CF-card, you may want to move the CF card to a different device which has more CPU cycles left.

Unfortunately, you cannot determine which function takes your CPU cycles away easily. However, you can determine which module does. In our previous example, you won't be able to determine that it's excessive config save operations on the CF card that produces the load, but you will be able to determine that its the CF card that does.

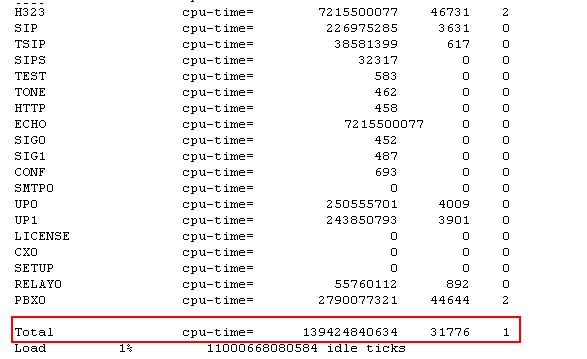

A module is a piece of code in the devices firmware that performs a certain task. You can think of it as a thread in an operating system such as Windows or Linux. Modules are named and you can retrieve a list of modules currently running on your device using the !mod command. Assuming your device has the IP address 172.16.0.10, you would open http://172.16.0.10/!mod with your browser. You will receive a list of modules such as this one:

In this table, the first column (modules) and the second column (ticks) are of interest. The second column shows the number of ticks used by the module since the counters were reset the last time. The system never resets the counters on its own. They will be reset if you add the clr option to the mod command (http://172.16.0.10/!mod clr). A tick by the way corresponds to some clock-tick found in the devices CPU (platform specific), so be prepared for large values.

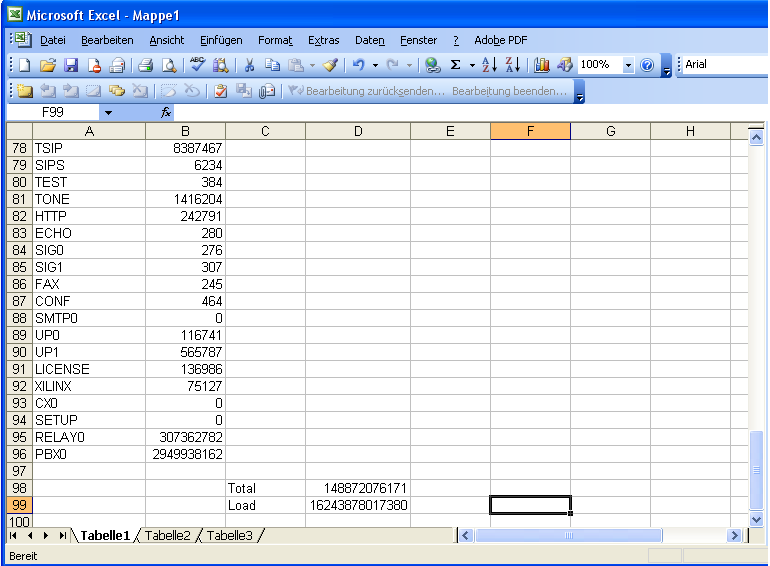

For easier analysis, you may want to import this table into your favourite spread sheet application. In Microsoft's Excel (c) for example, you would highlight the whole table, copy it in to your copy&paste buffer, then use Inhalte einfügen / Paste Content with Quelle: Text / Source: Text so you get each column in a separate Excel column (you may need to use the Import Assistant then, later V8 and V9 firmware builds output a more excel friendly table format). After some cleanup (remove unused columns), your spread sheet should then look something like this:

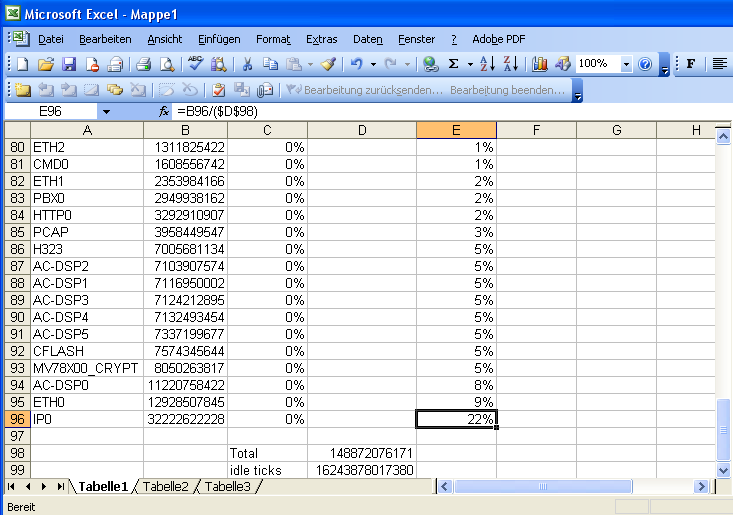

You can then add a column (column D in our example) which relates the number of ticks used by a module to the total number of ticks used (=B96/($D$98)). This is the relative load imposed by a certain module. Finally, sort the table by this column and you get a list of CPU hog candidates:

Unfortunately, there is no list of which module has which name, but a look at the module name will give a good indication of what it is. The typical scenario is that IP0 (the IP stack), ETHx (the ethernet drivers) and H323 (the H.323 signalling stack) will be on top. If the device is a gateway too, the DSP drivers (AC-DSPx) and SRTP encryption drivers (MV78X00_CRYPT) will be in the top 10 too. In our example, you can see that the compact flash driver (CFLASH) is also prominent. Compared to that, the PBX itself - accounting for 2% of the cycles consumed - is a side-note only in our example.

Be aware that these figures are counted from the last counter reset. To identify the source of a CPU resource problem, you would wait for the situation to happen (i.e. you would wait for a time the CPU usage is high) and then do the math. However, you should start the analysis by resetting the counters (!mod clr), then wait a significant amount of time (half a minute may be a good starter), then get the stats (!mod) and analyse. If you fail to reset the counters, your picture will be distorted as it is influenced by previous (and probably unknown) activity.

Finding a DRAM Memory Hog

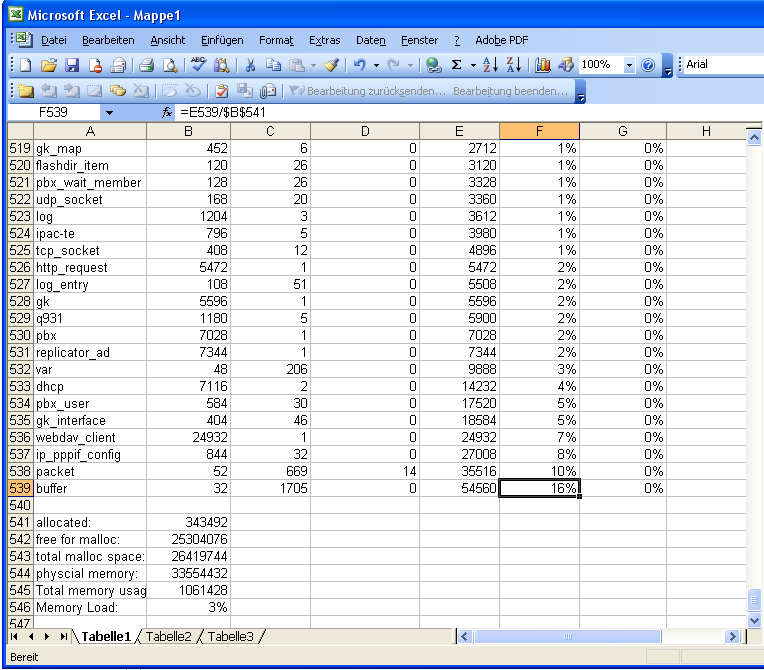

The strategy to find a dram memory hog is pretty much similar to the one to find the CPU hog. However, rather than using the !mod command, you would use the !mem (http://172.16.0.10/!mem) command. The output will look something like this (again, later builds have somewhat different layout, so excel import will be easier):

Total Memory Usage = 1061428 name size used free usage client_gui_button 64 0 0 0 client_gui_text 64 0 0 0 client_gui_break 60 0 0 0 client_gui_ruler 60 0 0 0 client_gui_list_element 60 0 0 0 client_gui_list 64 0 0 0 client_gui_table_cell 60 0 0 0 client_gui_table_row 60 0 0 0 client_gui_table 60 0 0 0

The table has a line for each type of internal object (e.g. client_gui_button). The size column gives the size of a single instance in byte, the used and free columns the number of allocated objects which are still in use or free for re-use again, while usage is size * (used + free). So clearly, the usage column is the amount of bytes what you are interested in. Again, we will do some spread-sheet math.

In our example, we relate the values in the usage column to the total allocatable memory size (column G) and the currently allocated memory (column F). The table is then sorted by column F, resulting in a list of memory using objects.

As for the modules, there is no which object type has which name list, but looking at the object names will give some indication on what kind of object it is and which function it may be related to. The screen-shot was taken from an idle system, this is why column G is all zero. In a life system, the result may look more like this:

Name Size Used Free Sum % of allocated %of available cmd_exec 376 36 74 41360 1% 0% flashdir_item 120 418 0 50160 1% 0% pbx_connector 508 67 47 57912 2% 0% h323_call 552 67 46 62376 2% 0% gk_map 448 176 1 79296 2% 0% tcp_socket 408 150 176 133008 4% 0% pbx_user 584 270 0 157680 4% 0% packet 52 4997 488 285220 8% 0% http_request 5472 33 75 590976 17% 0% buffer 32 10437 8255 598144 17% 0% ac_491 122648 6 0 735888 21% 0%

As a rule of thumb, many systems tend to run out of CPU more likely than out of DRAM memory.

packet and buffer are special, as they do not relate to a specific function. Whenever the system needs temporary, short lived memory - e.g. to carry a network packet - it will allocate a packet that carries one or more buffers. Peak network load thus will allocate a huge amount of packets and buffers which might not be used again but still consume memory. When the number of packets or buffers increases over time, this is most likely an indication of a memory leak.

The bottom of the memory table includes a number of lines as follows:

allocated: 1466608 free for malloc: 58580 total malloc space: 3149356 physcial memory: 8388608 Total memory usage: 3090776 Memory Load: 36%

The allocated: line is the number of allocated objects. That is, the sum of the usage column. Please note that this include all free objects of a specific type, which can be used for allocation of new objects of this type.

The free for malloc: line is the amount of memory which can be allocated from. This will be used whenever an object is needed for which there is no previously allocated but now unused object space (as shown in the free column).

The total malloc space: is the value of free for malloc: when the system has booted.

The Total memory usage: is the memory already claimed from the malloc space (that is total malloc space: minus free for malloc). It does not include static memory use such as decompressed firmware, stack, etc.

The Memory Load: finally relates the Total memory usage: to the physical memory:. Please note though that the box will run out of memory as soon as free for malloc goes down to zero! It might be useful thus to relate free for malloc: to total malloc space:, which is exactly what the MEM counter in Administration/Diagnostics displays.

Obtaining more Information about used Objects

Sometimes, object types - even though their meaning is pretty clear - cannot be related to functions easily. For example, if you see an entry like

tcp_socket 376 15 64 29704

it tells you that a number of sockets are used, but not what for. To see details of an object, some object types support the mem-info method. You would look at the detail properties of the objects of a certain type using e.g. http//172.16.0.10/!mem info tcp_socket (http//172.16.0.10/!mem info tcp_socket). The result list

0(c0e09a0c): NAT_LISTEN: ports=0:0, state=0:INIT, ostate=5680, x-q=0, r-q=0 1(c0e1073c): PCAP_SOCK_LISTEN: ports=2002:0, state=0:INIT, ostate=0, x-q=0, r-q=0 2(c0e17174): HTTP_LISTEN: ports=80:0, state=0:INIT, ostate=0, x-q=0, r-q=0 3(c0e173d8): HTTPS_LISTEN: ports=443:0, state=0:INIT, ostate=23130, x-q=0, r-q=0 4(c0e181b8): T_USER: ports=23:0, state=0:INIT, ostate=0, x-q=0, r-q=0 5(c0e1fcd8): LSRV_SOCK_LISTEN: ports=389:0, state=0:INIT, ostate=0, x-q=0, r-q=0 6(c0e718f4): GK-LISTEN: ports=2049:0, state=0:INIT, ostate=-16053, x-q=0, r-q=0 7(c0e71b54): GK-LISTEN: ports=2050:0, state=0:INIT, ostate=0, x-q=0, r-q=0 8(c0e71e3c): GK-LISTEN: ports=2051:0, state=0:INIT, ostate=0, x-q=0, r-q=0 9(c0e72310): GK-LISTEN: ports=2052:0, state=0:INIT, ostate=0, x-q=0, r-q=0 10(c0e725f8): GK-LISTEN: ports=2053:0, state=0:INIT, ostate=1950, x-q=0, r-q=0 11(c0e728e0): GK-LISTEN: ports=2054:0, state=0:INIT, ostate=-16148, x-q=0, r-q=0 12(c0e72bc8): H323_LISTEN: ports=1720:0, state=0:INIT, ostate=-16027, x-q=0, r-q=0

may include some useful hints. This is particularly useful for the packet data structure, as it is used by all classes: http//172.16.0.10/!mem info packet indicates the packet creator module:

5717(c1d1d0b8): ETHIF,c0078b38 len=31 5719(c18197fc): H323_OUT,c0078b38 len=31 5725(c1cfb864): H323_ACCEPT,c0078b38 len=31 5728(c1d04670): ETHIF,c0078b38 len=31 5729(c1d56ac8): HTTP4_REQUEST,c00e228c len=113 5730(c1f09518): HTTP_SOCKET,c0078b38 len=240

If there is a memory leak, the module causing it can easily be spotted.

Monitoring what's going on

The !mem info approach described above can also be used quite independently of memory leaks to get a real-time overview of what is going on in the system.

Let us assume you need to know how many myApps clients are connected to the system

- the first step would be to issue a

!memcommand to get the complete list of memory objects - now for the creative part: you need to guess the name of the object you are interested in. In our case, it is rather simple. We are looking for a client, so let's see which objects have an client as part of their name (and also have a value greater than 0 in the used column). What we find is

pbx_appclient_dialog_call 84 204 463 56028 pbx_appclient_dialog 76 1596 189 135660 pbx_appclient_presence 84 5050 425 459900 pbx_appclient_standby_monitor 72 0 0 0 pbx_appclient_login_context 44 0 2 88 pbx_appclient_session 264 176 10 49104

- this looks promising, pbx_appclient_session could be our friend. So we try <cmd>!mem info pbx_appclient_session and here you go:

155(84ce4e50): (Christoph Künkel) addr=172.16.68.10 secure=1 session=f64c7b8c57226001077f009033500068 ua=myApps for Windows deleting=0 closed=0

Note though that

- objects may or may not have the info method implemented. If not, you will see a useless list of memory addresses only

- support for the method and format of its output may change with builds

- all the !mem command variations are debug tools and not recommended for regular use (for example, you should not create a tool or app based on them)

Buffers

So far we have talked about objects in the operating system. However, there are also other users of dynamically allocated memory, the so-called buffers. These are used for network packets for example. You can use http://172.16.0.10/!buf to get a list of used buffers.

pool used free

0: 32 4129 2573 375312

1: 64 319 652 85448

2: 128 154 218 56544

3: 256 188 85 76440

4: 512 155 167 172592

5: 1024 24 6 31440

6: 2048 0 1 2072

7: 4096 120 4 510880

8: 8192 2 4 49296

1360024

The second column shows the buffer size (buffers are always allocated in multiple-of-2 sizes). To see who has allocated the buffers, you can issue http://172.16.0.10/!buf info size where size is the one of the valid buffer sizes (32 to 8192).

http://172.16.0.10/!buf info 8192 15c6e80: c=HTTP4_REQUEST(114988) 15c4e60: c=HTTP_GET(19838c) 15e8650: c=HTTP_GET(1983a0)

This example shows that 3 buffers of size 8192 are used and all are somehow related to HTTP GET requests.

Finding a Flash Memory Hog

Unfortunately, there is no counter graph for flash memory usage. Still, there is a statistics page as for CPU and DRAM memory. It is retrieved using the !mod cmd FLASHMAN0 info command (http://172.16.3.63/!mod cmd FLASHMAN0 info):

bottom 0xfe020000 base 0xfe020000 top 0xfffc0000 segsize 0x20000 segments 253 LDAP - used 128k avail 92k owned 1152k (max 3200k) VARS - used 67k avail 95k owned 256k (max 256k) 0 0xfe020000 free(0x00) owner MINI(0x09) magic 0x666d order 0x00020031 usage 0x00000001 ... 7 0xfe100000 free(0x00) owner FIRM(0x08) magic 0x666d order 0x0002002f usage 0x00000001 ... 226 0xffc60000 free(0xff) owner 255 (0xff) magic 0xffff order 0xffffffff usage 0xffffffff ... 230 0xffce0000 used(0x80) owner VARS(0x06) magic 0x666d order 0x00000008 usage 0x00000001 231 0xffd00000 used(0x80) owner LDAP(0x04) magic 0x666d order 0x00000009 usage 0x00000001

This table shows all allocated flash memory segments. Users include:

- MINI

- the mini (rescue) firmware

- FIRM

- the real firmware

- VARS

- the storage for non-volatile configuration parameters

- LDAP

- the directory storage (holds the PBX object configuration as well as call lists and personal user directories)

Each flash memory user has a fixed maximum quota. In our example, LDAP has set a maximum of 3200kB of which 1152kB are already claimed. In the current active flash segment (that is, the segment which is used to satisfy subsequent memory requests), 92kB are left for allocation. For the VARS flash user, all possible segments are claimed already (256kB of 256kB) and 95kB are left for allocation.

Wasting Memory by deleting Objects

When PBX objects are deleted, the GUID is kept in flash memory for a while with a note that the object has been deleted. This looks something like mod cmd FLASHDIR0 add-item 101 (cn=object that will be deleted soon)(isDeleted=true)(guid;bin=3886C5ABE909D3119475009033290009)(usn=18243). Note that this sample entry still takes approximately 110 bytes flash memory. If you experience lack of flash memory and see lots of such entries, you might want to save the devices config, remove the lines with (isDeleted=true) and re-load the config to get rid of these entries. This typically happens when you turn on a new replication for a box that had a large PBX configuration before. These now obsolete PBX entries will be deleted from the configuration when the replication takes place.

Finding a Compact Flash Hog

Compact flash card space can easily be examined using the Info button available in Administration/Diagnostics/CF (up to V8) or Reference9:General/Compact-Flash/General (from V9) . The CF card's content can be examined using the Browse CF Content link on the same page.

Using Syslog

Many failures are listed in the Alarms and Events sections, as discussed above. However, some are not. It is a good habit therefore to take transcript of the systems log messages and browse through them in search of intermittent problems. This can easily be done by storing the syslog on the local CF card (as defined on Configuration/General/Logging ). It is important to tick the check marks on Administration/Diagnostics/Logging in a sensible way in order not to flood the log with useless messages. As a starter, the following settings are recommended:

| Category | recommended setting | |

| TCP | off | |

| PPP | off | |

| PBX Calls | on | |

| Gateway Calls | on | |

| Gateway Routing | off | |

| H.323 Registrations | on | |

| SIP/UDP Registrations | off, unless you use SIP endpoints | |

| SIP/TCP Registrations | off, unless you use SIP endpoints | |

| SIP/TLS Registrations | off, unless you use SIP endpoints | |

| H.323-NAT | off | |

| Administration | off | |

| TELx/PRIx/PPP | off, unless you use this interface |

Please note that Administration might be a recommended setting if documentation of changes is an issue to you.

Resource sensitive PBX Configuration

Very often, resource issues are caused by inappropriate PBX configuration. Although there are of course a huge number of possibilities to do it wrong, here are a few prominent and easy to avoid things to keep in mind:

- do not use long identifiers. All PBX identifiers (user names, group names, ...) are stored in clear text in the PBX LDAP directory. Longer names use more flash space thus. If you deal with a 100 user PBX, you probably don't care. However, if you have 10.000 users structured in groups with a huge amount of members per group, calling a group

ctiinstead ofUsers with CTI Integrationdoes make a difference! - do not put objects in to groups they do not need to belong to. You may be tempted to add users to certain groups by default, thinking that it will make your life easier later on if the group assignment is in fact needed. However, group assignments not only consume flash space in the PBX's LDAP directory, but also consume in-core memory during run-time. Even more, doing so will make it more probably to exceed the maximum size of a single group (which is 2000 members currently). Having more than the allowed maximum of group members in a single group will result in strange behaviour which is difficult to track down.

- do not put objects as active members in to groups when they don't need to be active. Active group memberships consume additional DRAM memory as well as additional CPU cycles during run-time.

- do not configure group indications when they are not needed. Group indications will result in additional memory and CPU consumption as well as network traffic. When group indications are configured, information is distributed to the respective group members regardless of if it is used there or not. So if no group indication is required, don't send it! If it is required, use the smallest group available to send them to. Do not re-use a group for this purpose just because it exists if it includes more members than required for the group indications. Rather create a new group and restrict it to the members required. One particular bad habit is to put all users in a single group, have them all be active and configure this group as the group used for group indications for all users. While this has the beauty that PBX functions which require group indications will always work (which to configure correctly may be a bit tedious sometimes), but it has the downside that each call to any of these users suffers from increased CPU and network load with each added user!

- do not create objects that aren't needed. Each PBX object consumes flash and DRAM memory, even if it is never used.

- do not put objects in to the group used by the TAPI service provider which are never monitored anyway. All activity information for all users in the tapi group is conveyed by the PBX to the TAPI service provider. If it is never used, this is a waste of resources and adds load both to the PBX and to the machine running the TSP.

Monitoring a multi-Device System

If your system consists of more than one relevant device, you may want to configure Alarm, Event and Syslog forwarding (as configured in Configuration/General/Logging ) so you have this information in a single place to review.

Monitoring the Linux Application Platform (LAP)

To see the LAP's health status, first have a look at the Diagnostics/Status page.